Artificial intelligence is changing how intensive care units handle clinical documentation. AI documentation tools for the ICU, from large language models that generate discharge summaries to algorithms that calculate severity scores in real time, address one of critical care medicine’s oldest problems: the gap between the care delivered at the bedside and the records that capture it. This guide examines how these tools work, where they are deployed today, and what ICU teams need to know before adopting them.

The Documentation Burden in Critical Care

ICU clinicians operate in one of the most documentation-intensive environments in medicine. A single critically ill patient generates hundreds of data points per day: continuous vital signs, ventilator parameters, vasopressor doses, fluid balance, laboratory results, imaging, microbiology cultures, medication changes, procedure notes, family counselling records, and clinical assessments.

Despite this volume, the final documentation (the discharge summary, the severity scores, the clinical narrative) is still overwhelmingly produced manually. Commonly reported figures give a sense of the scale of this burden:

| Documentation Metric | Finding |
|---|---|
| Time spent on documentation per ICU shift | 40–50% of total physician time |
| Average time to complete one ICU discharge summary | 1.5–3 hours |
| Documentation-related errors per 100 ICU admissions | 15–25 (incompleteness, omissions, inconsistencies) |
| Physician burnout attributed partly to documentation | 55–60% of intensivists report this |

The consequences extend beyond physician fatigue. Incomplete documentation leads to rejected insurance claims, weakens medicolegal defence, undermines continuity of care during handoffs, and degrades the data available for quality improvement and research.

The documentation problem in critical care is not a knowledge problem — clinicians know what needs to be recorded. It is a workflow problem: the information exists in scattered, unstructured forms across monitors, lab systems, nursing charts, and physician memory. AI’s primary value is in bridging that gap — synthesizing structured data into coherent clinical narratives.

Why Critical Care Documentation Is Uniquely Difficult

Several features make ICU documentation harder than documentation in other clinical settings:

- Temporal density. A ward patient may have one set of vitals and one medication change per day. An ICU patient may have dozens of clinically significant data points per hour.

- Multi-system involvement. Critically ill patients typically have dysfunction across multiple organ systems simultaneously, requiring documentation of parallel clinical trajectories.

- High-stakes decisions. Medication doses in critical care (vasopressors, sedation, insulin infusions) are titrated continuously. Each change may need documentation for safety, billing, and legal purposes.

- Shift-based care. Unlike outpatient settings where one physician manages the full encounter, ICU care involves multiple handoffs. Each transition is an opportunity for information loss.

- Medicolegal exposure. ICU patients have the highest mortality rates in the hospital. Every clinical decision is potentially subject to retrospective scrutiny. Documentation standards are correspondingly higher.

For a deep exploration of what courts and regulators expect from ICU records, see our complete guide to medicolegal documentation in ICU practice.

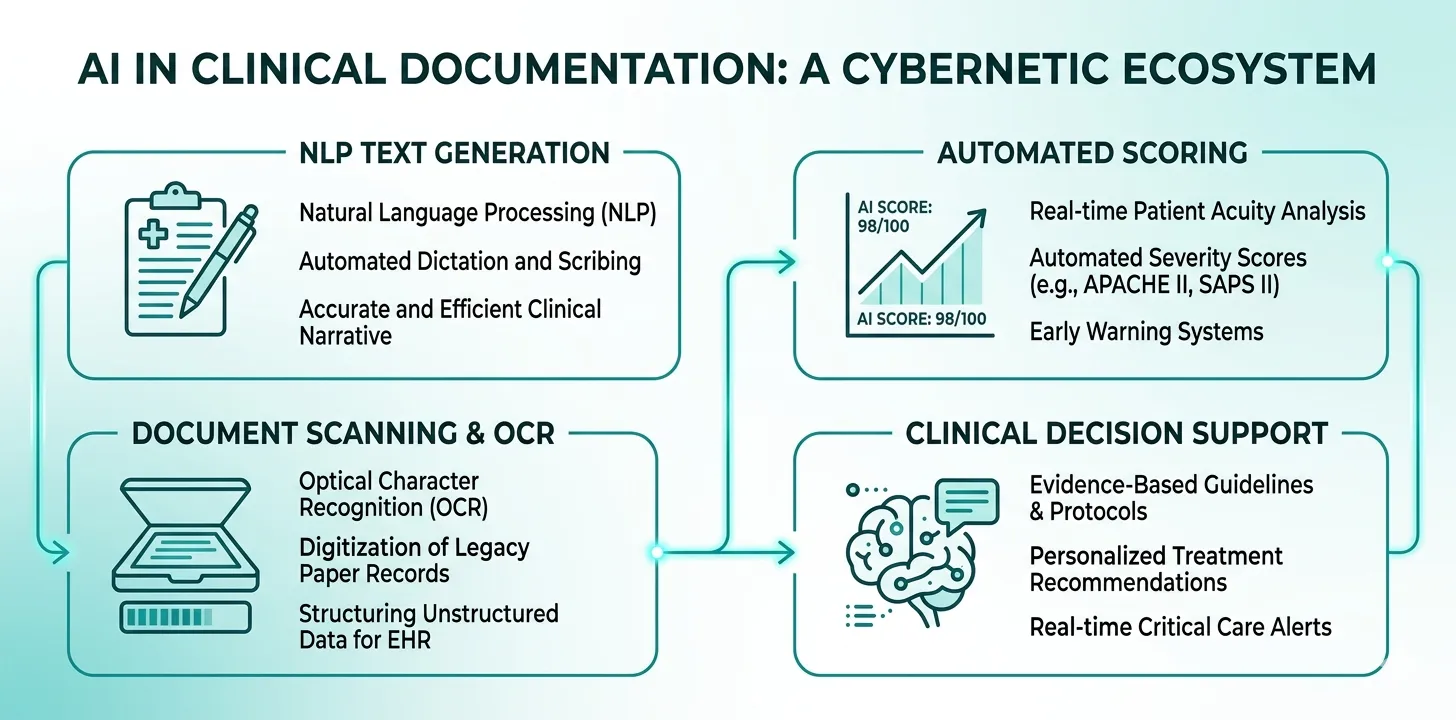

How AI Is Being Applied in ICU Documentation Today

AI in clinical documentation is not a single technology. It is a collection of techniques — each suited to different parts of the documentation workflow. Understanding these categories helps ICU teams evaluate which tools offer genuine value versus marketing hype.

Natural Language Processing and Large Language Models

Natural language processing (NLP) encompasses the ability of AI systems to understand, interpret, and generate human language. In ICU documentation, NLP is applied in two directions:

Structured-to-narrative (generation). Given structured clinical data — vitals, lab values, medication lists, procedure logs — an LLM generates a coherent clinical narrative. This is how AI-generated discharge summaries work. The model takes discrete data points and produces prose that reads like a physician-authored document.

Narrative-to-structured (extraction). Given unstructured clinical text — free-text progress notes, consultant letters, referral documents — NLP extracts structured data elements: diagnoses, medications, allergies, procedures.

Modern large language models (GPT-4, Gemini, Claude) have dramatically improved the quality of both directions. Earlier NLP systems relied on rigid templates and pattern matching. Current LLMs can handle ambiguity, medical terminology, and the kind of nuanced clinical reasoning that characterises ICU documentation.

The critical distinction is between AI that generates text from structured inputs (high reliability, auditable) and AI that interprets unstructured text (useful but requires more verification). The safest clinical AI documentation systems use the structured-to-narrative approach, where every statement in the output can be traced to a specific data input.

Clinical Scoring Automation

Severity scoring systems — SOFA, APACHE II, GCS, SAPS — are fundamental to ICU documentation. They quantify illness severity, guide clinical decisions, justify resource utilisation to payers, and serve as objective evidence in medicolegal proceedings.

Yet manual severity scoring is error-prone and time-consuming. Calculating a SOFA score requires pulling data from six organ systems — PaO₂/FiO₂ ratio, platelet count, bilirubin, cardiovascular status with vasopressor doses, GCS, and creatinine with urine output. Doing this daily for every patient in a busy ICU is a significant burden.

AI-powered scoring automation works by:

- Accepting structured clinical inputs (lab values, vitals, medication doses) entered during routine documentation

- Applying the scoring algorithm automatically — with the exact thresholds defined by the original scoring system

- Tracking scores over time to show disease trajectory

- Embedding the scores and their supporting data into the clinical narrative

This is not machine learning in the traditional sense — it is algorithmic automation guided by well-defined clinical rules. But it eliminates calculation errors, ensures daily scoring consistency, and creates a documented severity trajectory that is invaluable for both clinical and administrative purposes.

Document OCR and Data Extraction

In many healthcare settings worldwide, clinical data still exists on paper: handwritten nursing charts, printed lab reports, referral letters, consent forms. Optical character recognition (OCR) combined with AI post-processing can digitise these documents and extract structured data.

Modern OCR systems can:

- Read handwritten clinical notes, with accuracy that depends heavily on handwriting quality (roughly 60–95%)

- Parse printed laboratory reports and extract individual values

- Identify and extract key fields from structured forms (medication charts, fluid balance sheets)

- Handle photographs of documents taken with smartphone cameras

OCR accuracy for handwritten clinical notes varies significantly. Legible, consistent handwriting typically reaches 80–90% accuracy, while rushed, abbreviated ICU notes with medical shorthand can drop below 70%. Any AI system using OCR for clinical data must include a human verification step before the extracted data enters the medical record.

Clinical Decision Support

While not strictly a documentation tool, AI-powered clinical decision support (CDS) intersects with documentation in important ways. CDS systems can:

- Flag documentation gaps in real time (“GCS not recorded for today’s daily note”)

- Suggest differential diagnoses based on documented clinical findings

- Alert clinicians to trends that may not be apparent from individual data points (e.g., gradual decline in P/F ratio over 72 hours)

- Recommend guideline-concordant documentation for specific clinical scenarios (sepsis bundles, ventilator weaning protocols)

The documentation benefit is indirect but significant: CDS prompts lead to more complete, more timely clinical records.
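As an illustration of the first and third prompts in the list above, here is a minimal Python sketch of a gap check and a simple trend flag. The field names, the 72-hour window, and the 50-point drop threshold are all assumptions chosen for the example, not values taken from any guideline or product.

```python
# Hypothetical set of fields a unit might require in every daily note.
REQUIRED_DAILY_FIELDS = ("gcs_total", "map_mmhg", "fluid_balance_ml", "pf_ratio")


def find_documentation_gaps(daily_note: dict) -> list[str]:
    """Return one prompt per required field that is missing from the note."""
    return [
        f"{field} not recorded for today's daily note"
        for field in REQUIRED_DAILY_FIELDS
        if daily_note.get(field) is None
    ]


def pf_ratio_declining(
    readings: list[tuple[float, float]],
    window_h: float = 72.0,   # assumed look-back window
    min_drop: float = 50.0,   # assumed threshold, not a clinical standard
) -> bool:
    """Flag a fall in P/F ratio across the look-back window.

    readings: (hours_since_admission, pf_ratio) pairs sorted by time.
    Compares the earliest and latest reading inside the window.
    """
    if not readings:
        return False
    latest_time = readings[-1][0]
    recent = [(t, v) for t, v in readings if latest_time - t <= window_h]
    if len(recent) < 2:
        return False
    return recent[0][1] - recent[-1][1] >= min_drop


note = {"gcs_total": 14, "map_mmhg": 72, "fluid_balance_ml": -300}
print(find_documentation_gaps(note))
print(pf_ratio_declining([(0, 320), (24, 300), (48, 280), (72, 255)]))
```

A first-versus-last comparison is deliberately crude; a production system would more likely fit a trend and account for changes in ventilator settings.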

AI-Generated Discharge Summaries: How They Work

The discharge summary is the single most important document produced during an ICU admission. It consolidates the entire clinical narrative — from admission through daily progress to outcome — into one document that serves clinical, administrative, insurance, and medicolegal purposes.

For a detailed exploration of discharge summary requirements and best practices, see our complete guide to ICU discharge summaries.

AI-generated discharge summaries follow a consistent pipeline:

Step 1: Structured Data Collection During the Stay

The most effective AI documentation systems do not attempt to generate summaries from unstructured historical records. Instead, they collect structured data prospectively — as part of the daily clinical workflow:

- Admission data: Demographics, presenting complaint, diagnosis, comorbidities, baseline severity scores

- Daily clinical notes: Vitals, ventilator parameters, vasopressor doses, fluid balance, GCS, medication changes, procedures, clinical assessment

- Events: Significant clinical events (cardiac arrest, new complications, emergency procedures, family counselling)

- Outcome: Discharge condition, instructions, and follow-up plan — or cause and circumstances of death
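The four categories above map naturally onto a small data model. The following Python sketch shows one possible shape; every class and field name here is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from datetime import date, datetime
from typing import Optional


@dataclass
class Admission:
    age_years: int
    presenting_complaint: str
    diagnosis: str
    comorbidities: list[str] = field(default_factory=list)
    apache_ii: Optional[int] = None  # baseline severity score, if calculated


@dataclass
class DailyNote:
    note_date: date
    map_mmhg: Optional[float] = None
    fio2: Optional[float] = None
    norepinephrine_mcg_kg_min: Optional[float] = None
    fluid_balance_ml: Optional[int] = None
    gcs_total: Optional[int] = None
    medication_changes: list[str] = field(default_factory=list)
    procedures: list[str] = field(default_factory=list)
    assessment: str = ""


@dataclass
class ClinicalEvent:
    occurred_at: datetime
    description: str  # e.g. "cardiac arrest", "family counselling"


@dataclass
class Outcome:
    survived: bool
    summary: str  # discharge plan, or cause and circumstances of death


@dataclass
class IcuStay:
    admission: Admission
    daily_notes: list[DailyNote] = field(default_factory=list)
    events: list[ClinicalEvent] = field(default_factory=list)
    outcome: Optional[Outcome] = None

    def length_of_stay_days(self) -> int:
        """Days spanned by the daily notes, counting first and last day."""
        if not self.daily_notes:
            return 0
        dates = [note.note_date for note in self.daily_notes]
        return (max(dates) - min(dates)).days + 1


stay = IcuStay(Admission(64, "fever, hypotension", "septic shock"))
stay.daily_notes.append(DailyNote(date(2024, 1, 1), map_mmhg=62, gcs_total=13))
stay.daily_notes.append(DailyNote(date(2024, 1, 3), map_mmhg=74, gcs_total=15))
print(stay.length_of_stay_days())
```

Keeping optional fields explicit (`None` rather than a default number) lets downstream steps distinguish "not recorded" from "recorded as zero".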

Step 2: AI Synthesis

When triggered, the AI model receives the complete structured dataset for the patient’s ICU stay. Using this input, it generates a coherent clinical narrative that:

- Presents the admission context and clinical indication for ICU care

- Narrates the clinical course chronologically, highlighting key turning points

- Documents all procedures with indications and outcomes

- Includes severity score trajectories (SOFA, APACHE II) with supporting data

- Summarises medication history including antimicrobial therapy, vasopressors, and sedation

- Documents complications and their management

- Concludes with the clinical outcome and, for survivors, the discharge plan
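One way to picture the synthesis step is as prompt construction: the structured dataset is serialised and paired with explicit instructions about the sections listed above. The sketch below stops at building the prompt text and does not call any model; the function name, the instruction wording, and the input dictionary layout are assumptions for illustration.

```python
import json

# Section list mirrors the narrative elements described in the text.
SUMMARY_SECTIONS = [
    "Admission context and indication for ICU care",
    "Chronological clinical course with key turning points",
    "Procedures with indications and outcomes",
    "Severity score trajectory (SOFA, APACHE II) with supporting data",
    "Medication history: antimicrobials, vasopressors, sedation",
    "Complications and their management",
    "Outcome and, for survivors, the discharge plan",
]


def build_summary_prompt(stay: dict) -> str:
    """Combine fixed instructions with the stay's structured data as JSON."""
    section_list = "\n".join(
        f"{i}. {name}" for i, name in enumerate(SUMMARY_SECTIONS, start=1)
    )
    # default=str lets dates and datetimes serialise without extra code.
    data_block = json.dumps(stay, indent=2, sort_keys=True, default=str)
    return (
        "Write an ICU discharge summary using ONLY the structured data below.\n"
        "If a section has no supporting data, write 'Not documented'.\n\n"
        f"Sections, in order:\n{section_list}\n\n"
        f"Structured data (JSON):\n{data_block}\n"
    )


prompt = build_summary_prompt(
    {"diagnosis": "septic shock", "sofa_by_day": [8, 11, 6]}
)
print(prompt)
```

Restricting the model to the supplied data and asking it to mark empty sections explicitly are two simple ways to keep the output traceable to its inputs.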

Step 3: Physician Review and Finalisation

The AI-generated summary is presented to the attending physician for review. The physician can:

- Accept the summary as generated

- Edit specific sections for clinical accuracy or nuance

- Add clinical context that was not captured in the structured data

- Approve and sign the final document

The physician review step is non-negotiable. AI-generated summaries should be treated as high-quality first drafts that require clinical verification — not as autonomous final documents. The treating physician remains the author of record and bears responsibility for accuracy.


Clinical Scoring Automation: SOFA, APACHE II, and GCS

Manual calculation of severity scores is one of the most tedious and error-prone tasks in ICU documentation. Algorithmic automation removes the arithmetic and makes the result reproducible, although the score is only as reliable as the inputs entered.

SOFA Score Automation

The SOFA score assesses six organ systems, each scored 0–4, for a maximum of 24. Automated SOFA scoring works by mapping structured daily inputs to the SOFA criteria:

| Organ System | Required Input | AI/Algorithm Role |
|---|---|---|
| Respiratory | PaO₂, FiO₂ | Calculates P/F ratio, assigns score 0–4 |
| Coagulation | Platelet count | Maps to threshold, assigns score |
| Hepatic | Total bilirubin | Maps to threshold, assigns score |
| Cardiovascular | MAP, vasopressor type and dose | Applies complex vasopressor-dose logic |
| CNS | GCS (E+V+M) | Maps total to score |
| Renal | Creatinine, 24h urine output | Takes higher of two criteria |

The cardiovascular component is particularly valuable to automate because it requires matching vasopressor type and dose against specific thresholds — a calculation that is frequently done incorrectly when performed manually.
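The mapping in the table can be sketched in Python as below. The thresholds follow the SOFA criteria as commonly published and should be checked against the original definition before any real use. The sketch assumes bilirubin and creatinine in mg/dL, platelets in ×10³/µL, and vasopressor doses in µg/kg/min; it does not check the requirement that vasopressors have run for at least one hour. All function and parameter names are illustrative.

```python
from typing import Optional


def respiratory(pf_ratio: float, on_support: bool) -> int:
    # Scores 3 and 4 apply only with respiratory support.
    if on_support and pf_ratio < 100:
        return 4
    if on_support and pf_ratio < 200:
        return 3
    if pf_ratio < 300:
        return 2
    return 1 if pf_ratio < 400 else 0


def coagulation(platelets: float) -> int:
    for cutoff, score in ((20, 4), (50, 3), (100, 2), (150, 1)):
        if platelets < cutoff:
            return score
    return 0


def hepatic(bilirubin: float) -> int:
    for cutoff, score in ((12.0, 4), (6.0, 3), (2.0, 2), (1.2, 1)):
        if bilirubin >= cutoff:
            return score
    return 0


def cardiovascular(map_mmhg: float, dopamine: float = 0.0, dobutamine: float = 0.0,
                   epinephrine: float = 0.0, norepinephrine: float = 0.0) -> int:
    if dopamine > 15 or epinephrine > 0.1 or norepinephrine > 0.1:
        return 4
    if dopamine > 5 or epinephrine > 0 or norepinephrine > 0:
        return 3
    if dopamine > 0 or dobutamine > 0:
        return 2
    return 1 if map_mmhg < 70 else 0


def cns(gcs_total: int) -> int:
    if gcs_total < 6:
        return 4
    if gcs_total <= 9:
        return 3
    if gcs_total <= 12:
        return 2
    return 1 if gcs_total <= 14 else 0


def renal(creatinine: float, urine_ml_24h: Optional[float] = None) -> int:
    by_creatinine = 0
    for cutoff, score in ((5.0, 4), (3.5, 3), (2.0, 2), (1.2, 1)):
        if creatinine >= cutoff:
            by_creatinine = score
            break
    by_urine = 0
    if urine_ml_24h is not None:
        by_urine = 4 if urine_ml_24h < 200 else 3 if urine_ml_24h < 500 else 0
    # The renal component takes the higher of the two criteria.
    return max(by_creatinine, by_urine)


components = {
    "respiratory": respiratory(180, on_support=True),
    "coagulation": coagulation(90),
    "hepatic": hepatic(2.5),
    "cardiovascular": cardiovascular(60, norepinephrine=0.15),
    "cns": cns(13),
    "renal": renal(1.5, urine_ml_24h=400),
}
print(components, "total:", sum(components.values()))
```

Returning the component scores alongside the total, rather than the total alone, keeps each point traceable to the input that produced it.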

APACHE II Score Automation

The APACHE II score is calculated once — using the worst values from the first 24 hours of ICU admission. It incorporates 12 physiological variables, an age adjustment, and a chronic health evaluation. Automated APACHE II scoring:

- Identifies the worst (most abnormal) value for each variable within the first 24 hours

- Applies the correct point assignments (which vary per variable and use non-linear scales)

- Adds age points and chronic health points

- Produces the total score with a predicted mortality estimate
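A partial Python sketch of these steps follows. It covers only two of the scoring tables (heart rate and age) and the mortality equation, using the bands and coefficients as commonly reproduced from the original APACHE II publication; verify them against the source before relying on them. The diagnostic category weight is passed in as a parameter because it depends on a lookup table not reproduced here, and the tie-breaking rule in `worst_in_first_24h` is an assumption.

```python
import math
from typing import Callable


def heart_rate_points(hr: float) -> int:
    """APACHE II points for heart rate (beats per minute)."""
    if hr >= 180 or hr <= 39:
        return 4
    if hr >= 140 or hr <= 54:
        return 3
    if hr >= 110 or hr <= 69:
        return 2
    return 0  # 70-109


def age_points(age_years: int) -> int:
    for cutoff, points in ((75, 6), (65, 5), (55, 3), (45, 2)):
        if age_years >= cutoff:
            return points
    return 0


def worst_in_first_24h(
    readings: list[tuple[float, float]],
    points_fn: Callable[[float], int],
) -> float:
    """Pick the reading from the first 24 h that earns the most points.

    readings: (hours_since_admission, value) pairs.
    Ties keep the earliest qualifying reading (an arbitrary choice).
    """
    in_window = [value for hours, value in readings if 0 <= hours <= 24]
    if not in_window:
        raise ValueError("no readings within the first 24 hours")
    return max(in_window, key=points_fn)


def predicted_mortality(score: int, diagnostic_weight: float,
                        emergency_surgery: bool = False) -> float:
    """Logistic mortality estimate from the total APACHE II score."""
    logit = -3.517 + 0.146 * score + diagnostic_weight
    if emergency_surgery:
        logit += 0.603
    return 1.0 / (1.0 + math.exp(-logit))


hr_readings = [(1.0, 95), (6.0, 150), (20.0, 48), (30.0, 190)]
worst_hr = worst_in_first_24h(hr_readings, heart_rate_points)
print(worst_hr, heart_rate_points(worst_hr), age_points(67))
print(round(predicted_mortality(20, diagnostic_weight=0.0), 3))
```

Note that the reading at hour 30 is ignored even though it is the most abnormal: "worst" here means the value earning the most points within the window, not the numerically highest or lowest.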

GCS Automation and Tracking

Glasgow Coma Scale scoring is algorithmically simple but clinically nuanced. Automated systems accept component inputs (Eye, Verbal, Motor) and calculate the total, while flagging important clinical context — such as whether the patient was sedated, intubated (which confounds the Verbal component), or had pre-existing neurological deficits.
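A minimal sketch of that behaviour in Python: the total is only computed when all three components are testable, and confounders are returned as flags rather than silently folded into the number. The class name, flag wording, and the "NT" (not testable) notation used in the component string are assumptions for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GcsAssessment:
    eye: int                 # 1-4
    motor: int               # 1-6
    verbal: Optional[int]    # 1-5, or None when not testable
    intubated: bool = False
    sedated: bool = False


def summarise_gcs(a: GcsAssessment) -> dict:
    """Validate components, compute the total, and attach context flags."""
    if not 1 <= a.eye <= 4:
        raise ValueError("eye must be 1-4")
    if not 1 <= a.motor <= 6:
        raise ValueError("motor must be 1-6")
    if a.verbal is not None and not 1 <= a.verbal <= 5:
        raise ValueError("verbal must be 1-5")

    flags = []
    if a.intubated:
        flags.append("verbal component confounded by intubation")
    if a.sedated:
        flags.append("assessed under sedation")

    # A total is only meaningful when every component could be tested.
    total = None if a.verbal is None else a.eye + a.verbal + a.motor
    verbal_text = "NT" if a.verbal is None else str(a.verbal)
    return {
        "total": total,
        "components": f"E{a.eye}V{verbal_text}M{a.motor}",
        "flags": flags,
    }


print(summarise_gcs(GcsAssessment(eye=4, motor=6, verbal=None, intubated=True)))
print(summarise_gcs(GcsAssessment(eye=3, motor=5, verbal=4)))
```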

Automated scoring does not replace clinical judgment in interpreting scores. A SOFA score of 12 means different things in a previously healthy 30-year-old with acute sepsis versus an 80-year-old with pre-existing multi-organ dysfunction. The AI calculates; the clinician contextualises.

Document OCR and Data Extraction in Clinical Settings

Many ICUs worldwide still operate in partially paper-based environments. Even in facilities with electronic health records, certain data sources remain analogue: handwritten nursing charts, printed external lab reports, referral letters from other facilities, and consent forms.

AI-powered OCR bridges this gap by converting physical documents into structured digital data.

How Clinical OCR Works

- Image capture. A clinician photographs the document using a smartphone or tablet camera.

- Pre-processing. The AI corrects for orientation, lighting, and perspective distortion.

- Text recognition. OCR extracts the text content from the image.

- Contextual interpretation. An NLP layer interprets the extracted text in a clinical context — distinguishing, for example, between a creatinine value and a bilirubin value based on position and labelling.

- Structured output. The extracted data is presented in a structured format for verification and integration into the clinical record.
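The last two steps can be sketched without any OCR library by starting from text that an OCR engine has already produced. The example below uses a regular expression in place of a real NLP layer, which is a simplification; the analyte list, plausibility ranges, and output field names are assumptions for illustration.

```python
import re

# Hypothetical analytes with loose plausibility ranges (conventional units).
ANALYTES = {
    "creatinine": (0.1, 20.0),   # mg/dL
    "bilirubin": (0.1, 50.0),    # mg/dL
    "platelets": (1.0, 1500.0),  # x10^3/uL
}

# label, optional ':' or '-', numeric value, optional unit token
LINE_PATTERN = re.compile(
    r"^\s*(?P<label>[A-Za-z ]+?)\s*[:\-]?\s*"
    r"(?P<value>\d+(?:\.\d+)?)\s*(?P<unit>\S*)"
)


def extract_lab_values(ocr_text: str) -> list[dict]:
    """Turn OCR'd report lines into rows a clinician can verify."""
    rows = []
    for line in ocr_text.splitlines():
        match = LINE_PATTERN.match(line)
        if match is None:
            continue  # headers, footers, and unreadable lines are skipped
        label = match.group("label").lower()
        analyte = next((name for name in ANALYTES if name in label), None)
        if analyte is None:
            continue
        value = float(match.group("value"))
        low, high = ANALYTES[analyte]
        rows.append({
            "analyte": analyte,
            "value": value,
            "unit": match.group("unit"),
            # Out-of-range values are kept but marked for human review.
            "needs_review": not (low <= value <= high),
            "source_line": line.strip(),
        })
    return rows


text = (
    "City Hospital Laboratory\n"
    "Creatinine: 1.8 mg/dL\n"
    "Total Bilirubin 2.4 mg/dL\n"
    "Platelets 9500 x10^3/uL\n"
)
for row in extract_lab_values(text):
    print(row)
```

Keeping the `source_line` with each extracted value supports the side-by-side verification described in the next section. A range check catches gross misreads, but a plausible wrong digit will pass, which is why human verification remains necessary.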

Accuracy Considerations

| Document Type | Typical OCR Accuracy | Clinical Usability |
|---|---|---|
| Printed lab reports (standard format) | 95–99% | High: reliable for automated extraction |
| Typed referral letters | 90–95% | Good: requires spot-checking |
| Legible handwritten notes | 80–90% | Moderate: requires full verification |
| Rushed/abbreviated handwriting | 60–75% | Low: human transcription may be faster |
| Photographs in poor lighting | 70–85% | Variable: depends on image quality |

For OCR to be clinically useful, the verification workflow must be efficient. The best systems present extracted data alongside the original image, allowing the clinician to confirm or correct each value in context rather than re-reading the entire document.

Quality and Accuracy: How Good Is AI Documentation?

The central question for any clinical AI tool is accuracy. In documentation, errors are not merely inconvenient — they can affect patient safety, insurance processing, and legal defence.

What the Evidence Shows

Published evaluations of AI-generated clinical documentation report encouraging but nuanced results:

Factual accuracy. When generating from structured inputs, LLM-based documentation systems achieve factual accuracy rates of 95–98%. Errors typically involve minor temporal sequencing issues or phrasing preferences rather than incorrect clinical facts.

Completeness. AI-generated summaries are often more complete than manually written ones. A structured input system ensures that every documented data point is included in the output — whereas manual summaries are subject to recall bias and time pressure.

Clinical appropriateness. The language, terminology, and clinical reasoning in AI-generated summaries are generally rated as appropriate by physician reviewers. Modern LLMs trained on medical corpora produce text that reads naturally to clinicians.

Known limitations:

- Hallucination. LLMs can generate plausible-sounding but fabricated clinical details. This risk is substantially reduced when generating from structured inputs (versus free-text interpretation), but it is not eliminated.

- Nuance and judgment. AI cannot replicate the clinical judgment that a physician applies when deciding what to emphasise, what to contextualise, and what to de-emphasise in a clinical narrative.

- Atypical presentations. For standard clinical trajectories, AI performs well. For unusual presentations, rare diagnoses, or complex multi-system interactions, the AI may produce generic language that misses clinically important subtleties.
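For the hallucination risk in particular, one inexpensive screen is to check that every number in the generated text also appears somewhere in the structured inputs. The Python sketch below illustrates the idea; the function name and input layout are assumptions, and the check is intentionally narrow. It cannot detect fabricated non-numeric statements, nor a real number attached to the wrong parameter.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(generated_text: str, structured_inputs: dict) -> list[str]:
    """Return numeric tokens in the text that match no input value."""
    allowed: set[float] = set()

    def collect(value) -> None:
        # Walk nested dicts and lists, gathering every numeric value.
        if isinstance(value, dict):
            for item in value.values():
                collect(item)
        elif isinstance(value, (list, tuple)):
            for item in value:
                collect(item)
        elif isinstance(value, bool):
            return  # bools are ints in Python; ignore them
        elif isinstance(value, (int, float)):
            allowed.add(float(value))
        elif isinstance(value, str):
            allowed.update(float(tok) for tok in NUMBER.findall(value))

    collect(structured_inputs)
    return [
        tok for tok in NUMBER.findall(generated_text)
        if float(tok) not in allowed
    ]


inputs = {"sofa_by_day": [8, 11, 6], "creatinine_mg_dl": 2.1, "icu_days": 5}
draft = (
    "SOFA rose from 8 to 11 before falling to 6; "
    "creatinine peaked at 3.4 mg/dL over 5 days."
)
print(unsupported_numbers(draft, inputs))
```

A flagged number is a prompt for the reviewer, not proof of an error: derived values such as a computed P/F ratio would be flagged unless they are also stored among the inputs.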

AI-generated clinical documentation must always be reviewed by the responsible clinician before it becomes part of the medical record. No current AI system is reliable enough for autonomous clinical documentation without physician oversight. Treat AI as a drafting tool, not an autonomous author.

Comparing AI and Manual Documentation

| Dimension | Manual Documentation | AI-Assisted Documentation |
|---|---|---|
| Time to produce | 1.5–3 hours per summary | 2–5 minutes (including review) |
| Completeness | Variable: depends on recall | Consistently high: uses all structured inputs |
| Factual accuracy | High (when time is available) | High (95–98% from structured data) |
| Consistency across clinicians | Low: significant variability | High: standardised output |
| Medicolegal robustness | Depends on individual documentation habits | Structured, timestamped, auditable |
| Risk of hallucination | N/A | Low but non-zero; requires review |

Safety, Privacy, and Regulatory Considerations

Deploying AI in clinical documentation raises important questions about patient safety, data privacy, and regulatory compliance. ICU teams and hospital administrators must address these before implementation.

Patient Data Privacy

Clinical documentation contains the most sensitive category of personal data. AI systems processing this data must comply with applicable regulations:

- HIPAA (United States): Protected Health Information (PHI) must be processed in HIPAA-compliant environments. Cloud-based AI services must have Business Associate Agreements (BAAs) in place.

- GDPR (European Union): Health data is a special category requiring explicit consent or a legal basis for processing. Data minimisation and purpose limitation principles apply.

- DISHA (India): The Digital Information Security in Healthcare Act was proposed as a draft bill and has not been enacted. Current practice is guided by the Information Technology Act, 2000 and the Digital Personal Data Protection Act, 2023.

- National regulations elsewhere: Australia (Privacy Act), Canada (PIPEDA/provincial legislation), UK (UK GDPR), and many other jurisdictions have specific health data provisions.

The safest architectural approach is on-device or on-premises processing where feasible. When cloud processing is necessary, ensure that the AI provider offers healthcare-grade data handling: encryption in transit and at rest, no training on patient data, data residency controls, and auditable access logs.

Regulatory Classification

AI documentation tools may be classified differently depending on jurisdiction:

- Documentation aids (generating text from physician-verified inputs) are generally not classified as medical devices in most jurisdictions.

- Clinical decision support tools (suggesting diagnoses or treatment changes) may fall under medical device regulations (FDA in the US, CE marking in the EU, CDSCO in India).

- Autonomous diagnostic tools are subject to the strictest regulatory scrutiny.

ICU teams should verify the regulatory classification of any AI tool before deployment and ensure it is being used within its intended scope.

Clinical Safety Guardrails

Effective AI documentation systems implement multiple safety layers:

- Input validation. Physiologically implausible values (e.g., heart rate of 500, SpO₂ of 120%) are flagged before they enter the system.

- Output review. Every AI-generated document is presented for physician review before becoming part of the medical record.

- Audit trails. All inputs, AI outputs, and physician edits are logged with timestamps — creating a complete provenance chain.

- Hallucination detection. Advanced systems cross-reference generated text against the structured inputs to identify statements not supported by the data.

- Version control. The original AI output and the physician-reviewed final version are both retained.
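The first of these layers, input validation, is simple to sketch. The Python example below flags values outside a plausibility range before they are accepted; the parameter names and ranges are illustrative assumptions, not clinical reference limits.

```python
# Hypothetical plausibility bounds; a real system would set these with
# clinical input and may vary them by patient group.
PLAUSIBLE_RANGES = {
    "heart_rate_bpm": (20, 300),
    "spo2_percent": (50, 100),
    "map_mmhg": (20, 200),
    "temperature_c": (25.0, 45.0),
}


def validate_inputs(values: dict) -> list[str]:
    """Return a message for each value outside its plausible range.

    Parameters without a configured range are passed through unchecked.
    """
    problems = []
    for name, value in values.items():
        bounds = PLAUSIBLE_RANGES.get(name)
        if bounds is None:
            continue
        low, high = bounds
        if not (low <= value <= high):
            problems.append(
                f"{name}={value} outside plausible range {low}-{high}"
            )
    return problems


entry = {"heart_rate_bpm": 500, "spo2_percent": 120, "map_mmhg": 72}
for message in validate_inputs(entry):
    print(message)
```

Flagging rather than rejecting leaves the decision with the clinician, who can correct a typing error or confirm a genuinely extreme reading.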

Implementation: What ICU Teams Need to Know

Adopting AI documentation tools in an ICU is not purely a technology decision. It requires workflow redesign, staff engagement, and clear governance.

Prerequisites for Successful Implementation

Structured data collection. AI documentation tools produce their best output when they work from structured inputs. ICUs that rely entirely on free-text notes will need to adopt structured daily documentation templates — capturing vitals, lab values, medication doses, procedures, and clinical assessments in discrete fields.

Clinical champion. Successful implementations are led by a clinician who understands both the clinical workflow and the technology. This person bridges the gap between the IT team and the bedside staff.

Clear governance. Define who is responsible for reviewing AI-generated documents, how errors are reported and corrected, and what happens when the AI system is unavailable.

Staff training. Clinicians need to understand what the AI can and cannot do, how to use the system effectively, and how to critically review AI-generated output.

Common Implementation Pitfalls

| Pitfall | Consequence | Prevention |
|---|---|---|
| Deploying without structured input workflows | Poor AI output quality | Implement structured templates before AI |
| Skipping physician review of AI output | Errors enter the medical record | Make review mandatory in the workflow |
| Over-reliance on AI for clinical judgment | Subtle clinical nuances missed | Train staff that AI drafts, clinicians decide |
| Ignoring data privacy requirements | Regulatory violations, patient harm | Complete privacy impact assessment first |
| No fallback for system downtime | Documentation halts during outages | Maintain manual documentation capability |

Start small. Implement AI documentation for one specific use case — such as discharge summary generation — before expanding to other areas. This allows the team to build confidence, identify workflow issues, and establish governance processes in a controlled environment.


Measuring Success

ICU teams implementing AI documentation should track:

- Time savings: Average time to produce discharge summaries before and after implementation

- Completeness scores: Proportion of summaries containing all required elements (diagnosis, clinical course, procedures, medications, severity scores, complications, outcome)

- Error rates: Number of factual errors or omissions identified during physician review

- Clinician satisfaction: Self-reported documentation burden and satisfaction with AI output quality

- Insurance claim outcomes: Acceptance/rejection rates for claims submitted with AI-assisted documentation

- Audit performance: Results of internal and external documentation audits
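Two of these metrics, completeness and time savings, reduce to simple arithmetic. The Python sketch below shows one way to compute them; the dictionary keys and function names are assumptions for illustration, with the required elements taken from the list above.

```python
from statistics import mean

# Required elements as listed under "Completeness scores".
REQUIRED_ELEMENTS = (
    "diagnosis", "clinical_course", "procedures", "medications",
    "severity_scores", "complications", "outcome",
)


def completeness(summary: dict) -> float:
    """Fraction of required elements that are present and non-empty."""
    present = sum(1 for element in REQUIRED_ELEMENTS if summary.get(element))
    return present / len(REQUIRED_ELEMENTS)


def time_saved_percent(before_minutes: list[float],
                       after_minutes: list[float]) -> float:
    """Relative reduction in mean time per summary, as a percentage."""
    before, after = mean(before_minutes), mean(after_minutes)
    return 100.0 * (before - after) / before


summary = {
    "diagnosis": "septic shock",
    "clinical_course": "...",
    "procedures": ["central line"],
    "medications": ["norepinephrine"],
    "severity_scores": {"sofa": [8, 11, 6]},
    "complications": "",          # empty, so counted as missing
    "outcome": "discharged to ward",
}
print(round(completeness(summary), 2))
print(time_saved_percent([120, 180], [5, 10]))
```

Counting empty strings and empty lists as missing avoids rewarding summaries that contain a heading with nothing under it.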

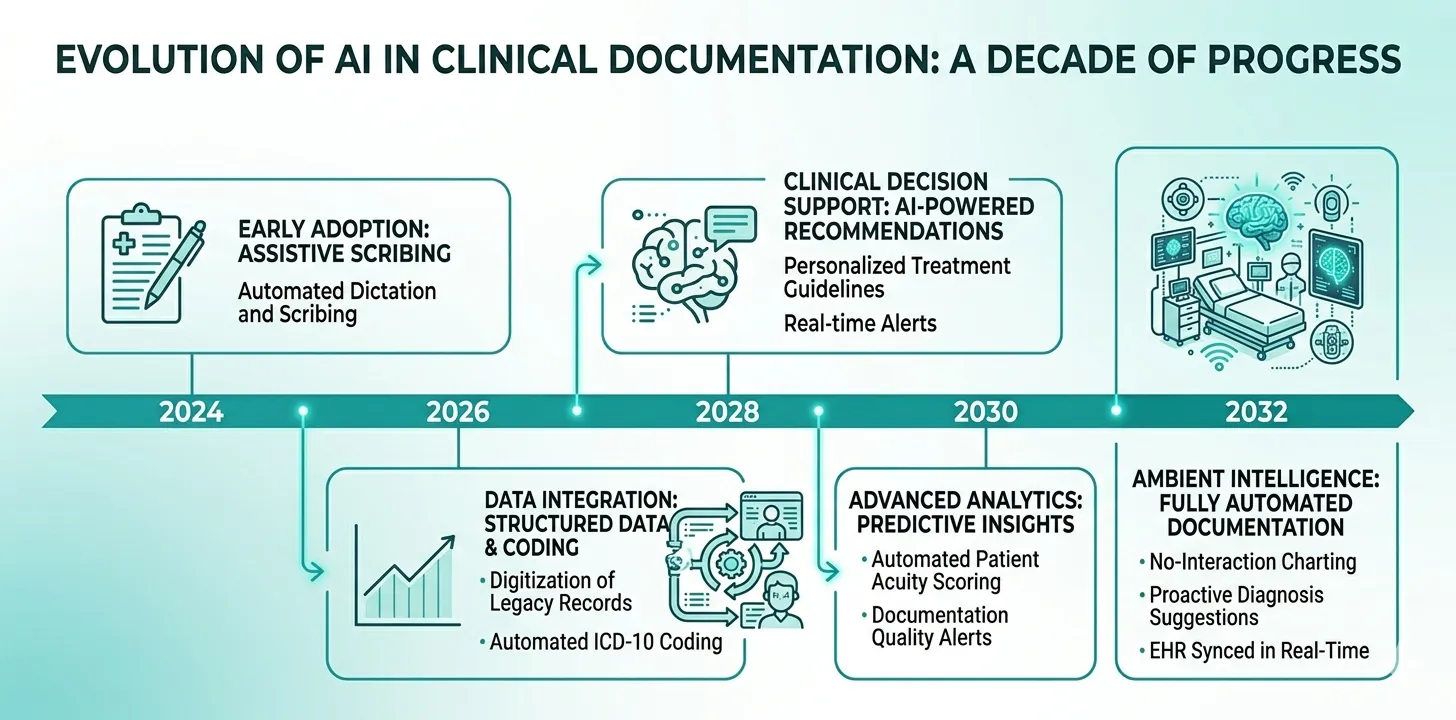

The Future of AI in ICU Documentation

The current generation of AI documentation tools is focused on generating text from structured data — a valuable but relatively narrow application. The trajectory points toward increasingly sophisticated capabilities.

Near-Term Developments (1–3 Years)

Real-time documentation. Instead of generating summaries at discharge, AI will maintain a continuously updated clinical narrative throughout the ICU stay — reflecting each new data point as it is entered.

Multimodal integration. AI systems will incorporate data from multiple sources simultaneously: structured EHR data, continuous monitoring feeds, imaging reports, and even bedside conversation (with appropriate consent).

Automated coding. AI will assign ICD-10, CPT, and DRG codes automatically from the clinical documentation — reducing coding lag and improving billing accuracy.

Predictive documentation prompts. Based on the clinical trajectory, AI will anticipate what documentation will be needed and prompt clinicians proactively (e.g., “Patient meets criteria for sepsis — consider documenting sepsis bundle compliance”).

Medium-Term Developments (3–7 Years)

Ambient clinical documentation. AI that listens to clinical conversations (ward rounds, family meetings) and generates documentation from natural speech — with clinician review and approval.

Cross-institutional learning. Federated learning approaches will allow AI systems to improve from documentation patterns across multiple institutions without sharing patient data.

Regulatory harmonisation. As AI documentation tools mature, regulatory frameworks will become more standardised across jurisdictions — reducing the compliance burden for global healthcare systems.

Long-Term Vision

Autonomous clinical narratives. AI systems that maintain a complete, real-time clinical record with minimal clinician input — functioning as an intelligent clinical scribe that captures everything happening at the bedside.

Documentation as a clinical tool. Instead of being a retrospective burden, documentation becomes a prospective clinical aid — with the AI-generated narrative surfacing patterns, risks, and opportunities that might otherwise be missed.

The long-term vision is not to remove clinicians from the documentation process but to invert the relationship. Instead of clinicians serving the documentation, the documentation serves the clinicians — providing structured, real-time clinical intelligence that makes both patient care and record-keeping better.

Key Takeaways for ICU Teams

- AI documentation tools are ready for clinical use, particularly for structured-to-narrative tasks like discharge summary generation and automated severity scoring.

- Structured data in, quality documentation out. The quality of AI-generated documentation is directly proportional to the quality of structured clinical inputs. Invest in structured daily documentation templates.

- Physician review is mandatory. No current AI system is reliable enough for autonomous clinical documentation. Every AI-generated document must be reviewed and approved by the responsible clinician.

- Start with high-value, low-risk use cases. Discharge summary generation and automated SOFA/APACHE II scoring are the most mature and immediately valuable applications.

- Address privacy and governance early. Data privacy, regulatory classification, and clinical governance must be established before deployment, not retrofitted afterwards.

- Measure outcomes, not just adoption. Track time savings, completeness, error rates, and downstream outcomes (insurance approvals, audit results) to demonstrate value.

Medical Disclaimer

This article is for informational and educational purposes only. It does not constitute medical advice, diagnosis, or treatment. Always consult a qualified healthcare professional for clinical decision-making. Rivara Health provides documentation tools — clinical judgement remains with the treating physician.

Conclusion

AI in critical care documentation is not a future possibility — it is a present reality. From automated discharge summaries that compress hours of manual work into minutes, to severity scoring algorithms that eliminate calculation errors, to OCR systems that digitise paper records, the technology is mature enough for thoughtful clinical deployment.

The ICU teams that benefit most will be those that approach AI documentation as a workflow transformation rather than a technology purchase. Structured data collection, physician oversight, clear governance, and continuous measurement are the foundations of successful implementation.

For ICU clinicians and hospital administrators evaluating these tools, the question is no longer whether AI will transform clinical documentation — but how quickly your institution will adopt it.

